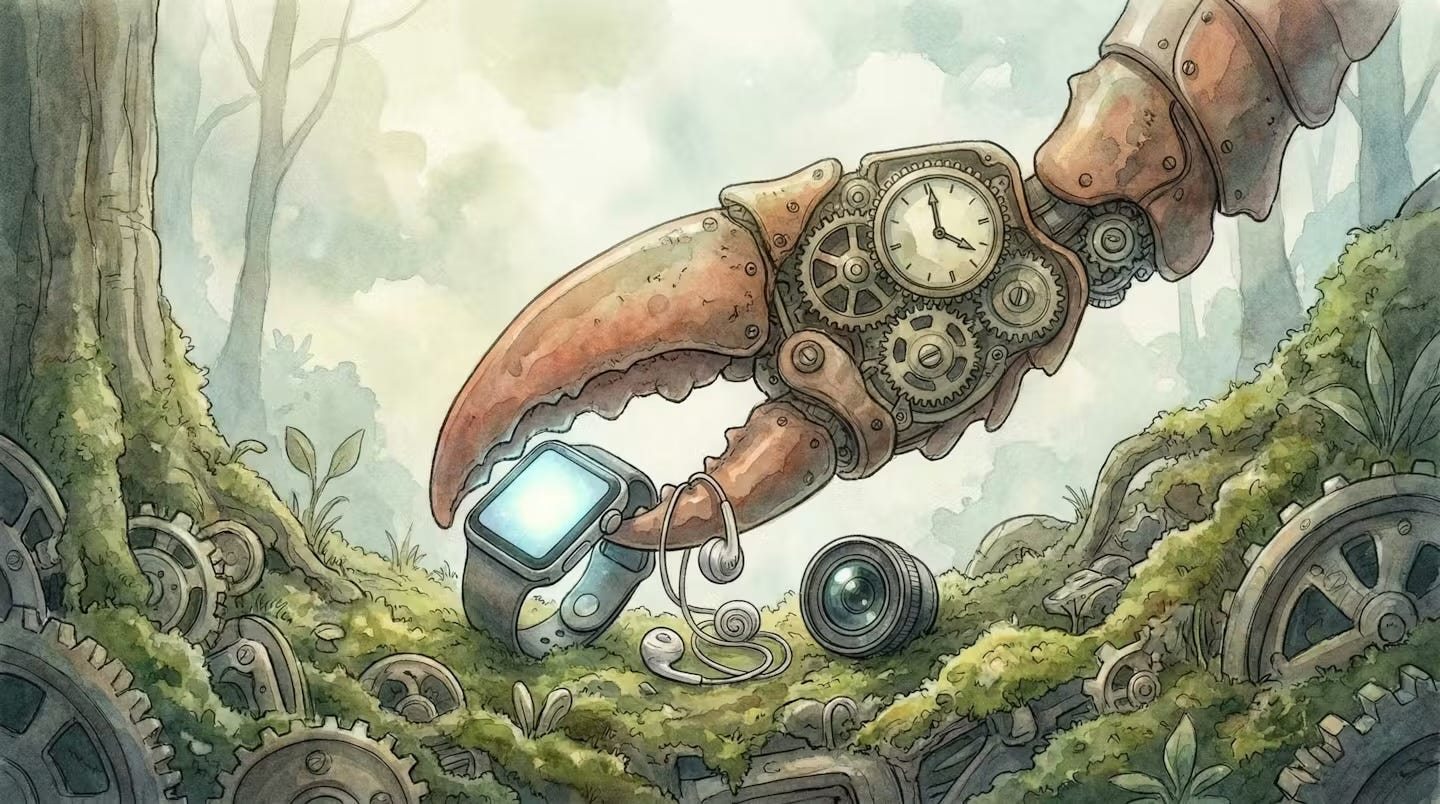

The Ideal Multimodal OpenClaw Gizmo

Three Devices You Already Own

By now, it feels like ancient history, but there have been numerous attempts to transform how we interact with AI through novel hardware. Remember the Humane AI Pin? The Rabbit R1? These gadgets promised to integrate large language models seamlessly into our daily lives with voice conversations, embedded cameras, and even the ability to project visuals onto our hands. The vision was compelling: a wearable AI assistant that doesn’t require a phone. The execution? Let’s just say the reviews were harsh.

Despite these setbacks, a consensus has emerged: smartphones, for all their versatility, may not be the ideal platform for multimodal AI. The phone is trapped behind a screen you must constantly unlock, navigate, and tap. The other prevailing view holds that the real future lies in mixed reality glasses, devices you’d wear all day, blending AI seamlessly with your visual field.

But that technology is not ready yet.

We’re asking for a device that’s as light as sunglasses, as powerful as the latest iPhone, capable of blending 3D objects with reality in real time, equipped with cameras and cellular connectivity, lasting all day on a single charge, and priced like a smartphone. The Apple Vision Pro, impressive as it is, remains a hefty “face brick” tethered to an external battery pack, with a starting price of $3,499. To achieve true practicality, mixed reality glasses would need to shrink to swim-goggle proportions, a leap that could be a decade away.

The Meta Ray-Ban Display Glasses were a pleasant surprise. Their form factor is reasonable enough to wear without looking like a cyborg cosplayer, and the functionality, while limited to a single overlay display rather than full 3D augmented reality, hints at what’s coming. But we’re not there yet.

So where does that leave us?

The Hardware Already in Your Drawer

Instead of waiting for the all-in-one device of our dreams, why not make the most of what we already own? Consider this combination:

- A smartwatch with always-on connectivity and a screen for quick glances.
- Wireless earbuds with built-in microphones for voice interaction.
- A clip-on camera, such as the Insta360 Go or a similar wearable recorder, for visual context.

Together, these three devices form an ideal multimodal AI interface: display, audio, video capture. You already own at least two of them.

The missing ingredient was never the hardware. It was the software that could unify them.

Imagine you’re attempting a tricky recipe, hands covered in flour, when your clip-on camera catches you reaching for the salt. OpenClaw interrupts through your earbuds: “Hold on, the caramel hasn’t reached the right color yet. Give it another forty seconds.” A timer appears on your watch. As you wait, you ask aloud what temperature the oven should be. It answers, then adds: “By the way, you’re almost out of vanilla extract. Want me to add it to your grocery list?” Done.

What makes this interesting is that OpenClaw already has most of the ingredients. It only needs custom skills to orchestrate across those three devices.
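To make the orchestration idea concrete, here is a minimal sketch of how one such skill might route the kitchen scenario across the devices. It does not use OpenClaw's actual skill API. Every name in it (`Event`, `Action`, `Orchestrator`, `caramel_skill`, the `"timer:40"` string) is hypothetical and exists only for illustration. It also assumes some upstream vision step has already turned the camera feed into a short text description.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Event:
    """Something an input device observed (hypothetical shape)."""
    modality: str  # "video" for the clip-on camera, "audio" for the earbud mic
    payload: str   # text description, assumed to come from an upstream model


@dataclass
class Action:
    """Something to send to an output device (hypothetical shape)."""
    device: str    # "earbuds" or "watch"
    content: str


Handler = Callable[[Event], List[Action]]


class Orchestrator:
    """Routes events to registered skills and collects their actions per device."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = {}
        # Stand-in for real device connections: one message queue per device.
        self.outbox: Dict[str, List[str]] = {"earbuds": [], "watch": []}

    def on(self, modality: str, handler: Handler) -> None:
        """Register a skill for one input modality."""
        self._handlers.setdefault(modality, []).append(handler)

    def dispatch(self, event: Event) -> List[Action]:
        """Run every skill registered for the event and queue the results."""
        actions: List[Action] = []
        for handler in self._handlers.get(event.modality, []):
            actions.extend(handler(event))
        for action in actions:
            self.outbox.setdefault(action.device, []).append(action.content)
        return actions


def caramel_skill(event: Event) -> List[Action]:
    """Hypothetical skill: warn by voice and start a watch timer."""
    if "reaching for salt" in event.payload:
        return [
            Action("earbuds", "Hold on, give the caramel another forty seconds."),
            Action("watch", "timer:40"),
        ]
    return []


orchestrator = Orchestrator()
orchestrator.on("video", caramel_skill)
orchestrator.dispatch(Event("video", "user is reaching for salt"))
```

After the dispatch, `orchestrator.outbox` holds one spoken message for the earbuds and one timer request for the watch. The point of the design is that a skill only names a device role, so swapping one brand of earbuds or watch for another would not touch the skill itself.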

The Implications

Here’s what the Humane AI Pin missed: the next breakthrough in AI interfaces may not come from new hardware. It may come from software that finally integrates the hardware we already have.

This has several implications worth considering:

First, it democratizes access. A smartwatch, earbuds, and a simple camera are far cheaper than a dedicated AI wearable, especially if you already own two of them. If the interface is software, the hardware becomes commoditized.

Second, it creates optionality. Don’t like your earbuds? Swap them. Prefer a different smartwatch? Fine. OpenClaw is device-agnostic: it works across platforms, operating systems, and messaging channels.

Third, it inverts the innovation dynamic. Instead of waiting for Apple or Meta to ship perfect glasses, thousands of developers are building skills and integrations for OpenClaw right now. The pace of iteration is accelerating weekly.

The ‘Device’ of the Future is Already Here

A year ago, I would have imagined this article ending with a speculative call to action: someone should build the software that ties these devices together. Now that software exists, and it’s open source.

The ideal multimodal AI gizmo isn’t a single device. It’s a constellation of devices you already own, unified by an agent that can quickly figure out how to orchestrate them in creative ways.

The Humane AI Pin tried to replace your phone. OpenClaw doesn’t replace anything; it recruits everything.